Let’s be honest. When we hear “real-time collaboration,” our minds jump straight to Google Docs. You know the scene: multiple cursors blinking, text appearing as if by magic, that satisfying little avatar bubble showing who’s typing what. It’s become the baseline, the expected feature. But here’s the deal—true collaboration in modern software is so much more than just editing the same sentence at the same time.

Building real-time collaborative features beyond basic text editing is about creating a shared, dynamic space. It’s about presence, context, and coordinated action. It transforms a tool from a solitary utility into a living workspace. And honestly, that’s where the real magic—and the real challenge—begins.

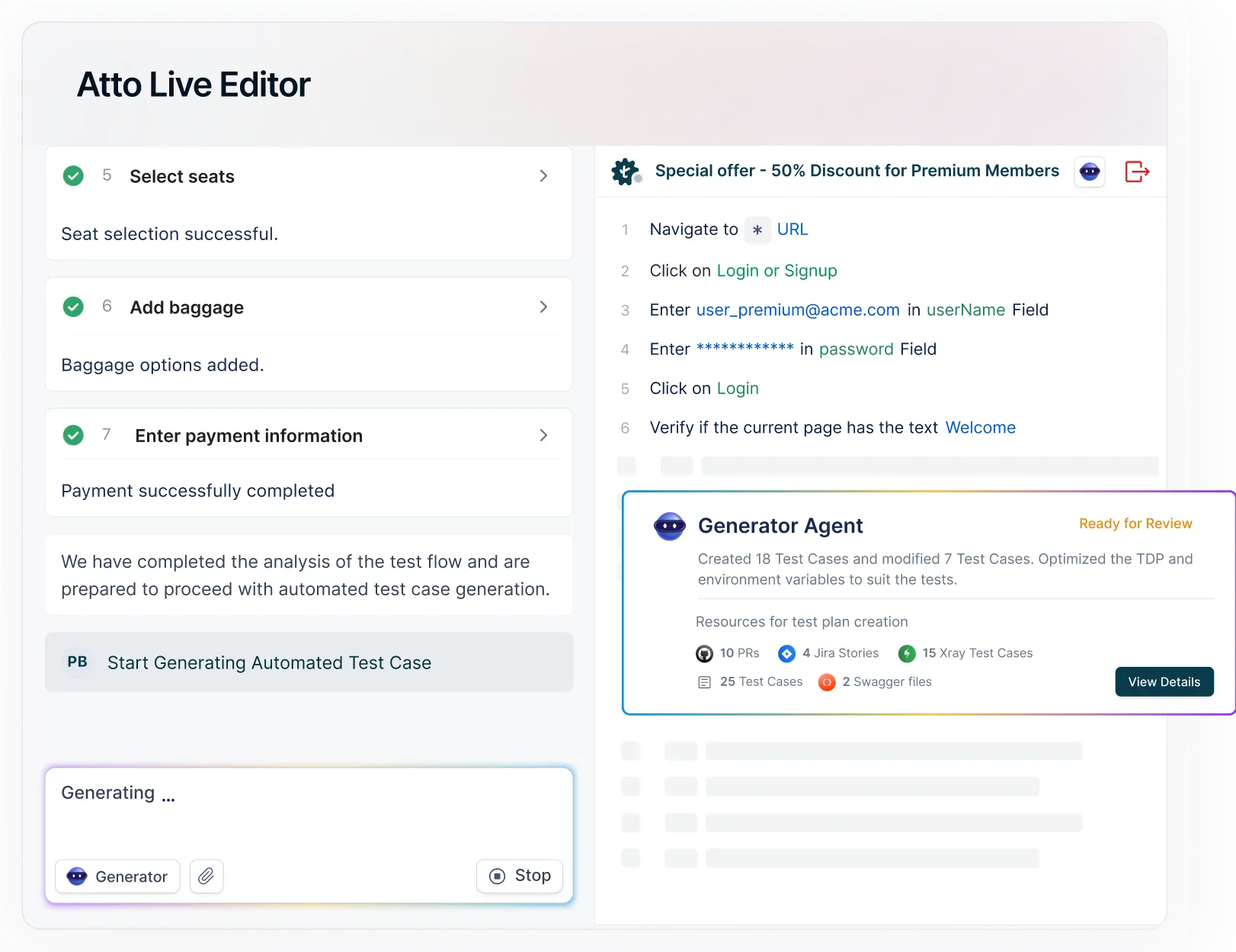

Why Stop at Text? The Next Frontier of Collaboration

Sure, synchronized text is a great start. But think about the friction in most complex projects. It’s not about writing a paragraph together. It’s about aligning on a design mockup, interpreting a dataset, mapping out a process, or even just knowing why someone just moved a task card on a board. The friction lives in the gaps between our tools and our intentions.

The next frontier is about closing those gaps. It’s about making the entire environment collaborative, not just the text box. This shift requires a different mindset. You’re not just syncing characters; you’re syncing state, intent, and awareness across a myriad of interactions.

Core Pillars of Advanced Real-Time Collaboration

To move beyond the basics, you need to architect around a few key pillars. These aren’t just features; they’re foundational layers that make everything else feel seamless and, well, human.

- Presence & Awareness: This is the “who’s here and what are they doing?” layer. It goes beyond a name in a sidebar. It’s live cursors, selection highlights, viewport tracking (seeing which part of a canvas someone is looking at), and active microphone indicators. It creates a sense of shared space, like hearing someone rustle papers in the room next to you.

- Coordinated Action on Complex Objects: Text is linear and relatively simple. Now imagine syncing edits on a vector graphic, a 3D model, a musical score, or a complex spreadsheet formula. This requires conflict resolution that is semantic: the system has to understand that rotating an object is different from changing its color, and merge those actions accordingly.

- Contextual Communication: Comments and chat are good. Comments and chat attached to a specific object, at a specific time, in a specific state are transformative. Think of a video editor where a comment is pinned to frame 243, or a design file where feedback is tied directly to a layer, surviving even if that layer is moved later.

- Shared Context & Telemetry: This is the subtle, often invisible layer. It means everyone sees the same data, the same filters applied, the same version of a linked asset. It’s ensuring that when you reference “the latest sales chart,” everyone is literally looking at the same image.
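To make the presence pillar a little more concrete, here is a minimal Python sketch of the state a server might keep per document. Everything in it is an assumption for illustration: the `Presence` fields, the `PresenceRoom` class, and the 10-second timeout are made up, not taken from any particular product or library.

```python
from dataclasses import dataclass, field


@dataclass
class Presence:
    """What one collaborator is doing right now (all fields are illustrative)."""
    user_id: str
    cursor: tuple[float, float] = (0.0, 0.0)                 # canvas coordinates
    selection: list[str] = field(default_factory=list)       # ids of selected objects
    viewport: tuple[float, float, float] = (0.0, 0.0, 1.0)   # x, y, zoom
    last_seen: float = 0.0                                   # time of last update, seconds


class PresenceRoom:
    """Tracks who is present in one document and drops stale entries."""

    def __init__(self, timeout_s: float = 10.0) -> None:
        self.timeout_s = timeout_s
        self._users: dict[str, Presence] = {}

    def update(self, user_id: str, now: float, **changes) -> Presence:
        """Merge a partial update (for example only the cursor) into a user's state."""
        state = self._users.setdefault(user_id, Presence(user_id=user_id))
        for name, value in changes.items():
            if name not in ("cursor", "selection", "viewport"):
                raise ValueError(f"unknown presence field: {name}")
            setattr(state, name, value)
        state.last_seen = now
        return state

    def active(self, now: float) -> list[Presence]:
        """Return users heard from within the timeout, evicting the rest."""
        self._users = {
            uid: p for uid, p in self._users.items()
            if now - p.last_seen <= self.timeout_s
        }
        return sorted(self._users.values(), key=lambda p: p.user_id)
```

Clients would send small, frequent updates (cursor moves, selection changes), and anyone who goes quiet for longer than the timeout simply fades out of the room. A real system would also need to throttle those updates and broadcast them to other clients, which this sketch leaves out.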

Practical Features That Feel Like Magic

Okay, so pillars are great. But what does this actually look like in practice? Let’s dive into some concrete features that signal a mature, thoughtful collaborative platform.

1. Multiplayer Canvases & Design Tools

Tools like Figma have set a high bar here. It’s not just about seeing each other’s cursors on a static image. It’s about live, simultaneous editing of vector points, watching someone drag a component from a library, seeing their viewport pan and zoom in real-time. The collaboration is the environment itself, not a layer on top of it. The pain point of “sending a static PNG back and forth” simply evaporates.

2. Collaborative Data Workflows

This is a big one for business apps. Imagine a dashboard where multiple analysts can filter, sort, and manipulate a dataset together. You see a colleague highlight a cluster of outlier data points. You watch them build a chart, and you can instantly start adding annotations. The “state” of the analysis—filters, selected rows, visualizations—is shared, creating a single source of truth for the investigation.
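One common way to share that analysis state is to treat it as the result of replaying an ordered log of small operations. The sketch below assumes a server has already put the operations in a single order; the operation names (`set_filter`, `select_rows`, and so on) and the `AnalysisState` shape are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AnalysisState:
    """The shared state of the analysis: filters, selection, and chart type."""
    filters: dict[str, str] = field(default_factory=dict)   # column -> required value
    selected_rows: set[int] = field(default_factory=set)
    chart: str = "table"


def apply_op(state: AnalysisState, op: dict) -> None:
    """Apply one operation from the shared, server-ordered log."""
    kind = op["kind"]
    if kind == "set_filter":
        state.filters[op["column"]] = op["value"]
    elif kind == "clear_filter":
        state.filters.pop(op["column"], None)
    elif kind == "select_rows":
        state.selected_rows |= set(op["rows"])
    elif kind == "deselect_rows":
        state.selected_rows -= set(op["rows"])
    elif kind == "set_chart":
        state.chart = op["chart"]
    else:
        raise ValueError(f"unknown operation: {kind}")


def replay(log: list[dict]) -> AnalysisState:
    """Rebuild the state from scratch; every client replaying the same log agrees."""
    state = AnalysisState()
    for op in log:
        apply_op(state, op)
    return state


def visible_rows(rows: list[dict], state: AnalysisState) -> list[dict]:
    """Rows that pass every shared filter."""
    return [r for r in rows if all(r.get(c) == v for c, v in state.filters.items())]
```

Because the state is derived only from the log, a colleague who joins late can replay it and land on exactly the same filters, selection, and chart as everyone else.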

3. Synchronized Media Playback & Annotation

For video, audio, and presentation teams, this is a game-changer. Live, synchronized playback where hitting “pause” stops the video for everyone. Adding comments that are timestamped and, crucially, remain in sync even if the video is edited later. It turns a linear review process into a simultaneous conversation, saving countless hours of “go to 1:23, no, 1:24… okay, there!”
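Both halves of that idea can be sketched in a few lines of Python. The assumptions here are loud ones: clients share a reasonably synchronized clock, the only kind of edit is cutting a single range out of the video, and the names `PlaybackState`, `position_at`, and `remap_after_cut` are invented for this example.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class PlaybackState:
    """Shared by every viewer and rebroadcast on each play, pause, or seek."""
    playing: bool
    position_s: float   # media position at the moment of the last change
    changed_at: float   # shared clock time of that change, in seconds


def position_at(state: PlaybackState, now: float) -> float:
    """Each client derives the current position locally from the shared state."""
    if not state.playing:
        return state.position_s
    return state.position_s + (now - state.changed_at)


def pause(state: PlaybackState, now: float) -> PlaybackState:
    """Pausing freezes the position for everyone who receives the new state."""
    return PlaybackState(playing=False, position_s=position_at(state, now), changed_at=now)


def remap_after_cut(comment_s: float, cut_start: float, cut_end: float) -> Optional[float]:
    """New timestamp for a comment after [cut_start, cut_end) is removed.

    Returns None when the commented moment itself was cut out.
    """
    if comment_s < cut_start:
        return comment_s
    if comment_s >= cut_end:
        return comment_s - (cut_end - cut_start)
    return None
```

Sending the state rather than a stream of "current time" ticks means a pause is one small message, and a comment at 1:23 quietly becomes a comment at 1:13 after ten seconds are trimmed from earlier in the video.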

| Feature Area | Basic Collaboration | Advanced Collaboration |
| --- | --- | --- |
| Feedback | Comments in a sidebar | Contextual pins, threaded replies on objects, resolved status |
| Navigation | Separate views | Follow mode, viewport broadcasting, “go to where I am” |
| Conflict Resolution | Last edit wins on text | Operational Transformation (OT) or CRDTs for complex objects, intent-aware merging |
| Presence | Avatar list | Live cursors, selection highlights, active tool/brush indication |

The Inevitable Hurdles (And How to Clear Them)

Building this stuff is hard. Like, really hard. The technical architecture shifts from a simple request-response model to continuous state synchronization between every connected client. You’re dealing with latency, offline scenarios, and the dreaded “merge conflict” on a scale far more complex than a paragraph.

You’ll wrestle with questions like: How do you handle a user on a slow connection? What happens when one person deletes a component at the exact millisecond someone else moves it under a new parent? The solutions often lie in data structures like CRDTs (Conflict-free Replicated Data Types) or algorithms like Operational Transformation, which are fascinating but, you know, not exactly light weekend reading.
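For a taste of what a CRDT looks like, here is one of the simplest: an object whose properties are independent last-writer-wins registers. It is a sketch under stated assumptions, not a production design. The `LWWObject` class and the `(counter, replica id)` stamps are illustrative, and it does not attempt the delete-versus-reparent case, which generally needs a more specialized structure.

```python
from dataclasses import dataclass, field
from typing import Any

# A stamp orders writes: (logical counter, replica id). The replica id breaks
# ties, so two different writes never compare as equal.
Stamp = tuple[int, str]


@dataclass
class LWWObject:
    """One canvas object whose properties are independent last-writer-wins registers."""
    props: dict[str, tuple[Stamp, Any]] = field(default_factory=dict)

    def set(self, name: str, value: Any, stamp: Stamp) -> None:
        """Keep the write only if it is newer than what we already hold."""
        current = self.props.get(name)
        if current is None or stamp > current[0]:
            self.props[name] = (stamp, value)

    def merge(self, other: "LWWObject") -> None:
        """Fold in another replica's state; the order of merges does not matter."""
        for name, (stamp, value) in other.props.items():
            self.set(name, value, stamp)

    def value(self) -> dict[str, Any]:
        """The plain property values, without stamps."""
        return {name: v for name, (_, v) in self.props.items()}


# Two replicas edit the same object while disconnected from each other.
ana, ben = LWWObject(), LWWObject()
ana.set("rotation", 90, (1, "ana"))
ben.set("color", "red", (1, "ben"))
ana.set("color", "blue", (2, "ana"))

# Exchange snapshots in either order; both replicas converge.
ana_snapshot, ben_snapshot = LWWObject(dict(ana.props)), LWWObject(dict(ben.props))
ana.merge(ben_snapshot)
ben.merge(ana_snapshot)
print(ana.value() == ben.value())  # True
```

Because each property is resolved separately, a rotation and a color change made at the same time both survive. Only two writes to the same property actually compete, and the stamp decides the winner the same way on every replica.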

And beyond the tech, there’s the human factor. Too much presence can feel invasive. A screen jumping around because someone else is navigating can be nauseating. The key is giving users control—a “follow” toggle, the ability to hide cursors, to mute notifications. The best collaborative features feel helpful, not hectic.
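Here is one way that kind of user control might look on the client side, as a small Python sketch. The `LocalView` and `ViewPrefs` names are hypothetical, and the rule that manual panning ends follow mode is a design assumption, not something every tool does.

```python
from dataclasses import dataclass
from typing import Optional

Viewport = tuple[float, float, float]  # x, y, zoom


@dataclass
class ViewPrefs:
    """Per-user settings that keep collaboration opt-in."""
    following: Optional[str] = None   # user id being followed, or None
    show_cursors: bool = True


class LocalView:
    """The local user's view of a shared canvas."""

    def __init__(self, viewport: Viewport = (0.0, 0.0, 1.0)) -> None:
        self.viewport = viewport
        self.prefs = ViewPrefs()

    def on_remote_viewport(self, user_id: str, viewport: Viewport) -> bool:
        """Apply a remote viewport only if the local user chose to follow that person."""
        if self.prefs.following != user_id:
            return False
        self.viewport = viewport
        return True

    def on_local_pan(self, viewport: Viewport) -> None:
        """Manual navigation takes over and ends follow mode."""
        self.prefs.following = None
        self.viewport = viewport

    def visible_cursors(self, cursors: dict[str, tuple[float, float]]) -> dict:
        """Remote cursors to draw, or none if the user has hidden them."""
        return dict(cursors) if self.prefs.show_cursors else {}
```

The important property is the default: nobody's screen moves unless they asked for it, and grabbing the canvas yourself always wins.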

Where Do We Go From Here? The Human Layer

So, what’s next after synchronized canvases and data? The frontier is becoming less about the objects and more about the human layer on top. Think integrated, lightweight voice chat that starts automatically when you join a document—like walking into a meeting room. Think AI assistants that can summarize the changes made while you were offline, or translate collaborative actions into project management updates automatically.

The goal, ultimately, isn’t to mimic being in the same room. It’s to create something better than being in the same room. To have the spontaneity and connection of physical presence, but paired with the superpowers of digital context—perfect memory, instant calculation, and a shared, malleable reality.

Building real-time collaborative features beyond text editing is, in the end, an exercise in empathy. It’s about understanding how people actually work together—the glances, the pointing, the simultaneous “aha!” moments, the quiet parallel work. It’s about building bridges between minds, not just syncing databases. And that, well, that’s a product vision worth coding toward.